AWS EMR – Private Subnets

Introduction

In this guide, we’ll walk through creating an Amazon EMR cluster in a private subnet using Terraform.

Placing your EMR cluster in a private subnet keeps it from being directly reachable from the internet, which improves both security and compliance posture.

👉 View the complete code on GitHub

Prerequisites

Before proceeding, make sure you have:

An AWS account with permissions to create resources

Terraform installed on your local machine

Step 1: Setting Up the Terraform Configuration

Create a new Terraform configuration file (emr_cluster.tf) and define the AWS provider:

provider "aws" {
  profile = "terraform"
  region  = var.region
}

terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.42.0"
    }
  }
}

Here, we configure the AWS provider to use the “terraform” CLI profile and the region defined in var.region, and pin the provider to the 5.42.x release series.
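The configuration refers to var.region here and to var.tags in later steps, but the guide does not show their declarations. A minimal sketch of what they might look like follows; the default region and the tag values are assumptions for illustration, not values taken from the original code.

```hcl
# variables.tf (hypothetical file name)

variable "region" {
  description = "AWS region to deploy the EMR cluster into"
  type        = string
  default     = "us-east-1" # assumed default; change to your region
}

variable "tags" {
  description = "Tags applied to every resource created by this configuration"
  type        = map(string)
  default = {
    Project   = "emr-private-subnet" # illustrative values only
    ManagedBy = "terraform"
  }
}
```

Either default can be overridden at apply time, for example with `terraform apply -var="region=eu-west-1"`.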

Step 2: Declaring Data Sources

Use a data source to look up values from AWS at plan time, in this case the Availability Zones of the configured region:

data "aws_availability_zones" "available" {}
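The empty block above returns every zone the account can see. A slightly stricter variant, shown here as a sketch that would replace the block above rather than sit next to it, limits the result to zones that are currently available and skips opt-in zones such as Local Zones, which is usually what a three-AZ VPC layout wants.

```hcl
data "aws_availability_zones" "available" {
  # Only zones that are currently usable.
  state = "available"

  # Exclude Local Zones and other opt-in zones.
  filter {
    name   = "opt-in-status"
    values = ["opt-in-not-required"]
  }
}
```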

Step 3: Defining Local Variables

Define reusable local variables:

locals {
  name        = replace(basename(path.cwd), "-cluster", "")
  vpc_name    = "MyVPC1"
  vpc_cidr    = "10.0.0.0/16"
  bucket_name = "slvr-emr-bucket-443"
  azs         = slice(data.aws_availability_zones.available.names, 0, 3)
}

azs takes the first three Availability Zones returned for your AWS region. Note that slice fails if the region returns fewer than three zones.
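If the configuration needs to work in regions with fewer than three zones, the slice bound can be clamped to the number of zones actually returned. This is a sketch only; az_count and azs_safe are hypothetical names that do not appear in the original code.

```hcl
locals {
  # Use at most three zones, or fewer if the region has fewer.
  az_count = min(3, length(data.aws_availability_zones.available.names))

  # Same expression as local.azs, with the upper bound clamped.
  azs_safe = slice(data.aws_availability_zones.available.names, 0, local.az_count)
}
```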

Step 4: Configuring the EMR Module

Define the EMR module to launch your cluster:

module "emr_instance_group" {
  source  = "terraform-aws-modules/emr/aws"
  version = "~> 1.2.1"

  name = "${local.name}-instance-group"

  release_label_filters = {
    emr6 = { prefix = "emr-6" }
  }
  applications = ["spark", "hadoop"]

  # Terminate the cluster after one hour of inactivity (value is in seconds).
  auto_termination_policy = { idle_timeout = 3600 }

  bootstrap_action = {
    example = {
      path = "file:/bin/echo"
      name = "Just an example"
      args = ["Hello World!"]
    }
  }

  configurations_json = jsonencode([
    {
      "Classification" : "spark-env",
      "Configurations" : [
        {
          "Classification" : "export",
          "Properties" : { "JAVA_HOME" : "/usr/lib/jvm/java-1.8.0" }
        }
      ],
      "Properties" : {}
    }
  ])

  # Setting bid_price requests Spot capacity for the group; omit it for On-Demand.
  master_instance_group = {
    name           = "master-group"
    instance_count = 1
    instance_type  = "m5.xlarge"
    bid_price      = "0.25"
  }

  core_instance_group = {
    name           = "core-group"
    instance_count = 1
    instance_type  = "c4.2xlarge"
    bid_price      = "0.25"
  }

  ebs_root_volume_size = 64

  # Instance groups launch into a single subnet; use the first private one.
  ec2_attributes = {
    subnet_id = element(module.vpc.private_subnets, 0)
    key_name  = "Login-1"
  }

  vpc_id             = module.vpc.vpc_id
  is_private_cluster = true

  master_security_group_rules = {
    "rule1" = {
      description = "Allow SSH ingress"
      type        = "ingress"
      from_port   = 22
      to_port     = 22
      protocol    = "tcp"
      cidr_blocks = ["0.0.0.0/0"]
    },
    "rule2" = {
      description = "Allow all egress traffic"
      type        = "egress"
      from_port   = 0
      to_port     = 0
      protocol    = "-1"
      cidr_blocks = ["0.0.0.0/0"]
    }
  }

  keep_job_flow_alive_when_no_steps = true
  log_uri                           = "s3://${module.s3_bucket.s3_bucket_id}/"
  step_concurrency_level            = 3
  termination_protection            = false
  visible_to_all_users              = true

  tags = var.tags

  depends_on = [module.vpc, module.s3_bucket]
}
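The SSH rule in the module accepts connections from 0.0.0.0/0. Because the master node sits in a private subnet, that range is broader than it needs to be. One possible tightening, shown as a fragment of the module block rather than a complete configuration, is to allow SSH only from addresses inside the VPC; using local.vpc_cidr as the source range is an assumption, and a bastion or VPN CIDR may suit your network better.

```hcl
  # Fragment: replaces master_security_group_rules inside module "emr_instance_group".
  master_security_group_rules = {
    "rule1" = {
      description = "Allow SSH from inside the VPC only"
      type        = "ingress"
      from_port   = 22
      to_port     = 22
      protocol    = "tcp"
      cidr_blocks = [local.vpc_cidr] # assumed source range
    },
    "rule2" = {
      description = "Allow all egress traffic"
      type        = "egress"
      from_port   = 0
      to_port     = 0
      protocol    = "-1"
      cidr_blocks = ["0.0.0.0/0"]
    }
  }
```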

Notes:

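Instance Groups = Each group (master, core, task) uses a single instance type; different groups can use different types, as in the example above.

Instance Fleets = Mix of several instance types and of On-Demand and Spot capacity within one fleet → often more cost-effective.

Instance Groups launch in the one private subnet you specify; Fleets accept several subnets and EMR picks one of them at launch.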

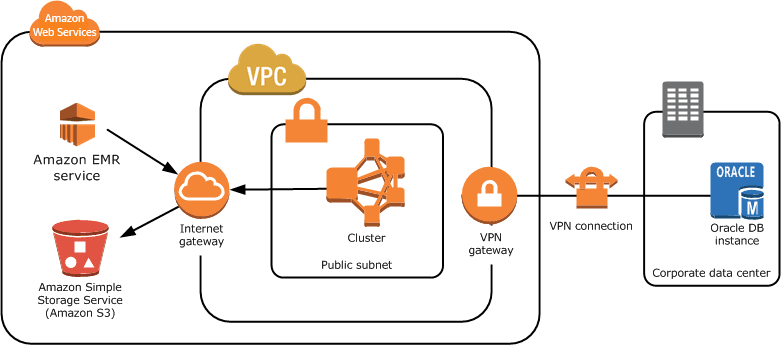
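For comparison, a fleet-based variant of the Step 4 module might look like the sketch below. It is not part of the original code: the module name emr_instance_fleet, the capacities, and the second instance type are illustrative, and the attribute names follow the examples published with the terraform-aws-modules/emr module, so they should be checked against the module version you pin.

```hcl
# Hypothetical fleet-based variant; arguments not shown carry over from Step 4.
module "emr_instance_fleet" {
  source  = "terraform-aws-modules/emr/aws"
  version = "~> 1.2.1"

  name = "${local.name}-instance-fleet"

  release_label_filters = {
    emr6 = { prefix = "emr-6" }
  }
  applications = ["spark", "hadoop"]

  # Keep the master node On-Demand so a Spot interruption cannot end the cluster.
  master_instance_fleet = {
    name                      = "master-fleet"
    target_on_demand_capacity = 1
    instance_type_configs = [
      { instance_type = "m5.xlarge" }
    ]
  }

  # Core capacity is split between On-Demand and Spot across two instance types.
  core_instance_fleet = {
    name                      = "core-fleet"
    target_on_demand_capacity = 1
    target_spot_capacity      = 2
    instance_type_configs = [
      { instance_type = "c4.2xlarge", weighted_capacity = 1 },
      { instance_type = "c5.2xlarge", weighted_capacity = 1 }
    ]
    launch_specifications = {
      spot_specification = {
        allocation_strategy      = "capacity-optimized"
        timeout_action           = "SWITCH_TO_ON_DEMAND"
        timeout_duration_minutes = 5
      }
    }
  }

  # Fleets take a list of subnets; EMR selects one when the cluster launches.
  ec2_attributes = {
    subnet_ids = module.vpc.private_subnets
    key_name   = "Login-1"
  }

  vpc_id             = module.vpc.vpc_id
  is_private_cluster = true
  log_uri            = "s3://${module.s3_bucket.s3_bucket_id}/"
  tags               = var.tags
}
```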

Step 5: Configuring the VPC

Define your VPC and subnet layout:

module "vpc" {
  source = "terraform-aws-modules/vpc/aws"

  name = local.vpc_name
  cidr = local.vpc_cidr

  azs             = local.azs
  public_subnets  = [for k, v in local.azs : cidrsubnet(local.vpc_cidr, 8, k)]
  private_subnets = [for k, v in local.azs : cidrsubnet(local.vpc_cidr, 8, k + 8)]

  enable_nat_gateway = true
  single_nat_gateway = true

  # DNS settings for this VPC (the default_vpc_* inputs only affect the account's
  # default VPC). Both are needed for interface endpoints with private DNS.
  enable_dns_hostnames = true
  enable_dns_support   = true

  # Tag required on subnets used with the EMR managed policies.
  private_subnet_tags = {
    "for-use-with-amazon-emr-managed-policies" = true
  }

  tags     = var.tags
  vpc_tags = { Name = local.vpc_name }
}

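The NAT Gateway gives the private subnets outbound internet access, for example to download packages during bootstrap actions. S3 traffic does not need it once the S3 gateway endpoint from Step 6 is in place.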
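The subnet expressions in the VPC module rely on cidrsubnet, which carves smaller networks out of the VPC range. As a worked example, with vpc_cidr set to 10.0.0.0/16 and three zones (indexes 0, 1 and 2), adding 8 bits yields /24 networks numbered by the last argument. The local names below are hypothetical and exist only to show the resulting values.

```hcl
locals {
  # public_subnets: cidrsubnet(vpc_cidr, 8, k) for k = 0, 1, 2
  example_public_subnets = [
    cidrsubnet("10.0.0.0/16", 8, 0), # "10.0.0.0/24"
    cidrsubnet("10.0.0.0/16", 8, 1), # "10.0.1.0/24"
    cidrsubnet("10.0.0.0/16", 8, 2), # "10.0.2.0/24"
  ]

  # private_subnets: cidrsubnet(vpc_cidr, 8, k + 8) for k = 0, 1, 2
  example_private_subnets = [
    cidrsubnet("10.0.0.0/16", 8, 8),  # "10.0.8.0/24"
    cidrsubnet("10.0.0.0/16", 8, 9),  # "10.0.9.0/24"
    cidrsubnet("10.0.0.0/16", 8, 10), # "10.0.10.0/24"
  ]
}
```

The offset of 8 keeps the private ranges clear of the public ones while leaving room for up to eight public subnets.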

Step 6: Configuring VPC Endpoints

Add private network access to AWS services:

module "vpc_endpoint" {
  # Use the registry path of the submodule instead of the local .terraform cache,
  # which only exists after the VPC module has been downloaded.
  source = "terraform-aws-modules/vpc/aws//modules/vpc-endpoints"

  vpc_id             = module.vpc.vpc_id
  security_group_ids = [module.vpc_endpoints_sg.security_group_id]

  endpoints = merge(
    {
      # Gateway endpoint: attached to route tables; private DNS does not apply.
      s3 = {
        service         = "s3"
        service_type    = "Gateway"
        route_table_ids = flatten([module.vpc.private_route_table_ids])
        policy          = data.aws_iam_policy_document.generic_s3_policy.json
        tags            = { Name = "${local.vpc_name}-s3" }
      }
    },
    {
      # Interface endpoints for the EMR API and STS, one per service.
      for service in toset(["elasticmapreduce", "sts"]) :
      service => {
        service             = service
        service_type        = "Interface"
        subnet_ids          = module.vpc.private_subnets
        private_dns_enabled = true
        tags                = { Name = "${local.vpc_name}-${service}" }
      }
    }
  )

  tags = var.tags

  depends_on = [module.vpc, module.vpc_endpoints_sg]
}

These endpoints let the cluster reach S3, the EMR API, and STS over private connections inside the VPC, without sending that traffic through the NAT Gateway or over the public internet.
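The endpoint module refers to module.vpc_endpoints_sg and data.aws_iam_policy_document.generic_s3_policy, neither of which is shown in this guide. The sketch below is one way they could be defined and is an assumption, not the original code: the security group allows HTTPS from the private subnets, and the S3 policy is deliberately permissive, since EMR nodes also read from AWS-owned buckets (for example package repositories) and an overly narrow policy can prevent the cluster from starting.

```hcl
# Security group attached to the interface endpoints (assumed definition).
module "vpc_endpoints_sg" {
  source  = "terraform-aws-modules/security-group/aws"
  version = "~> 5.0"

  name        = "${local.vpc_name}-vpc-endpoints"
  description = "Security group for VPC endpoint access"
  vpc_id      = module.vpc.vpc_id

  # Endpoints are called over HTTPS from the private subnets.
  ingress_with_cidr_blocks = [
    {
      rule        = "https-443-tcp"
      description = "HTTPS from private subnets"
      cidr_blocks = join(",", module.vpc.private_subnets_cidr_blocks)
    }
  ]

  tags = var.tags
}

# Endpoint policy for the S3 gateway endpoint (assumed, permissive starting point).
data "aws_iam_policy_document" "generic_s3_policy" {
  statement {
    effect    = "Allow"
    actions   = ["s3:*"]
    resources = ["*"]

    principals {
      type        = "*"
      identifiers = ["*"]
    }
  }
}
```

Access to individual buckets is still governed by IAM and bucket policies; the endpoint policy only limits what may pass through the endpoint.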

Step 7: Configuring the S3 Bucket

Set up a dedicated bucket for EMR logs and scripts:

module "s3_bucket" {
  source  = "terraform-aws-modules/s3-bucket/aws"
  version = "~> 3.0"

  bucket = local.bucket_name

  # Additional configurations like encryption can go here
}
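As an illustration of the additional configuration mentioned in the comment, the bucket block could be expanded as follows. This is a sketch that would replace the block above; the choice of SSE-S3 (AES256) encryption and of force_destroy are assumptions, not settings from the original code.

```hcl
module "s3_bucket" {
  source  = "terraform-aws-modules/s3-bucket/aws"
  version = "~> 3.0"

  bucket = local.bucket_name

  # Assumption: handy for throwaway environments; remove it if logs must be retained.
  force_destroy = true

  # Keep the log bucket private.
  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true

  # Encrypt objects at rest with S3-managed keys.
  server_side_encryption_configuration = {
    rule = {
      apply_server_side_encryption_by_default = {
        sse_algorithm = "AES256"
      }
    }
  }

  tags = var.tags
}
```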

Step 8: Deploying the Configuration

Once everything is set up:

terraform init

terraform plan

terraform apply

Confirm with yes when prompted.

This will:

Create your VPC, subnets, endpoints, and S3 bucket

Launch an EMR cluster inside your private subnet
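To see what was created once the apply finishes, a couple of outputs can be added. This is a minimal sketch: the output names are hypothetical, and cluster_id is assumed to be exposed by the EMR module version pinned in Step 4.

```hcl
# outputs.tf (hypothetical file name)

output "emr_cluster_id" {
  description = "ID of the EMR cluster created in Step 4"
  value       = module.emr_instance_group.cluster_id
}

output "private_subnet_ids" {
  description = "Private subnets created by the VPC module"
  value       = module.vpc.private_subnets
}
```

Running `terraform output` after the apply prints both values.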

Conclusion

You’ve successfully deployed an Amazon EMR cluster inside a private subnet using Terraform 🎯

This setup:

Ensures EMR operates without public exposure

Uses VPC endpoints for S3 & AWS APIs

Can be extended for cost optimization (spot fleets, autoscaling)

📘 Official AWS Reference:

Creating EMR clusters in a VPC (AWS Docs)