Apache Hadoop — Background

Technologies come and go, but data is here to grow!

Introduction

Data is the core of every modern application, driving insights, automation, and user experiences. Over time, technologies have evolved to collect, process, and analyze this data more efficiently.

Here’s a quick historical view of that evolution:

Excel

Oracle Database, Microsoft SQL Server

Hadoop

Spark

Each stage emerged to overcome limitations of its predecessors — particularly in scale, performance, and flexibility.

The Big Data Problem

With the rise of the internet, smartphones, and IoT, data exploded in both volume and variety.

We began dealing with massive datasets in formats like JSON, XML, DOC, MP4, and Avro — data streaming in at high velocity from diverse sources.

Traditional relational databases (RDBMSs) struggled because they weren’t designed for:

Volume – petabytes and beyond

Variety – structured, semi-structured, and unstructured data

Velocity – real-time and streaming workloads

These three challenges together form what we call the 3 Vs of Big Data.

Despite decades of improvements, traditional databases were not built to handle these scale problems efficiently — leading to the rise of distributed computing.

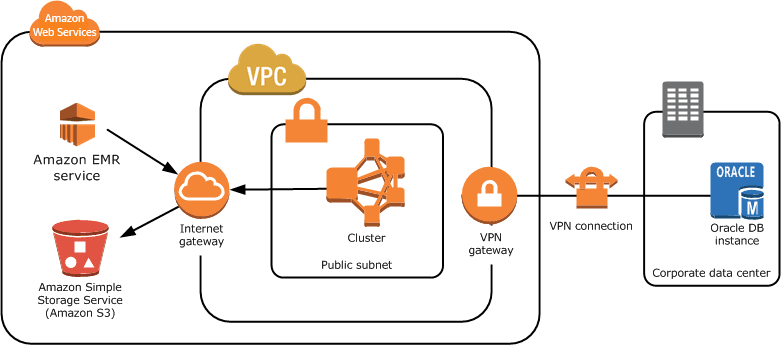

Distributed Computing

To solve challenges around scalability, availability, and cost, distributed systems like Apache Hadoop and Apache Spark emerged.

They distribute data across clusters of machines and process it in parallel, allowing:

High throughput

Fault tolerance

Near-linear scalability
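As a rough single-machine illustration of this idea, the sketch below splits a dataset into partitions, processes them in parallel with a thread pool, and combines the partial results. The names `partition`, `process_partition`, and `parallel_sum`, and the worker count, are assumptions made for illustration; a real cluster spreads partitions across machines rather than threads.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(records, num_partitions):
    """Split records into num_partitions slices (round-robin)."""
    return [records[i::num_partitions] for i in range(num_partitions)]


def process_partition(part):
    """Per-partition work; here it is just a partial sum."""
    return sum(part)


def parallel_sum(records, num_workers=4):
    parts = partition(records, num_workers)
    # Each partition is handled independently, as a worker node would do.
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partial_results = list(pool.map(process_partition, parts))
    # Combine the partial results into the final answer.
    return sum(partial_results)


if __name__ == "__main__":
    print(parallel_sum(list(range(1, 1001))))
```

Because each partition is processed without touching the others, adding workers lets the same code handle more data, which is the property distributed engines rely on.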

Key Features of Distributed Systems

Scalability — Handle growing data volumes easily.

High Availability — Tolerate node failures without losing data.

Cost Efficiency — Scale horizontally using commodity hardware.

Among these, Apache Spark later gained traction due to its in-memory computation and ease of use — but Hadoop laid the foundation.

Basic Background: Hadoop

At its core, Hadoop consists of three major layers:

YARN - Cluster resource manager

HDFS - Distributed storage layer

MapReduce - Computation framework

YARN acts as the operating system of the cluster, managing resources and scheduling jobs.

HDFS stores data in blocks across nodes, ensuring replication and fault tolerance.

MapReduce provides a computation framework for processing data in parallel using Java-based programs.
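To make the MapReduce model concrete, here is a minimal word-count sketch of the map, shuffle, and reduce phases. It is written in plain Python and runs in a single process, so it does not use Hadoop's Java API; the function names are hypothetical and only illustrate the shape of the computation.

```python
from collections import defaultdict


def map_phase(line):
    """Emit (word, 1) pairs for one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(mapped_pairs):
    """Group values by key, as the framework does between map and reduce."""
    groups = defaultdict(list)
    for key, value in mapped_pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Combine all values emitted for one key."""
    return key, sum(values)


def word_count(lines):
    mapped = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(k, v) for k, v in grouped.items())


counts = word_count(["big data big cluster", "data node"])
```

On a real cluster, the map calls run on the nodes that hold the input blocks, and the shuffle moves each key's values to the node that runs its reducer.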

You can think of Hadoop as a distributed operating system for big data — simplifying how you work with clusters of machines.

Modern engines like Spark can run on YARN and read from HDFS, building on this foundation while abstracting away much of the complexity.

Hadoop Ecosystem

Hadoop’s core consists of HDFS, YARN, and MapReduce (YARN was added in Hadoop 2), and a broader ecosystem has evolved around it.

| Component | Purpose |
|---|---|
| Hive | SQL-like data warehousing on Hadoop |
| HBase | NoSQL database for large datasets |
| Sqoop | Transfer data between RDBMS and Hadoop |
| Oozie | Workflow scheduler for Hadoop jobs |

These tools extend Hadoop’s capabilities, turning it into a complete big data platform.

Hadoop Architecture

To understand Hadoop deeply, you need to know its three main subsystems:

HDFS (Storage Layer)

YARN (Resource Management Layer)

MapReduce (Computation Layer)

Hadoop runs on clusters of commodity hardware. Each component runs as a Java daemon process — a long-running background service.

Let’s break this down starting with HDFS.

HDFS — Hadoop Distributed File System

HDFS was inspired by Google’s GFS (Google File System).

It allows reliable storage and access to very large datasets using a master-slave architecture.

Key Components

1. NameNode (Master)

Runs on the master node.

Stores metadata about files — directories, block locations, and replication info.

Think of it as the librarian that knows where every book (data block) is stored.

2. DataNode (Worker)

Stores the actual data blocks.

Each file is split into blocks (default size: 128 MB), and each block is replicated across nodes (three copies by default) for reliability.

Example — Metadata in NameNode

When you store a 1 GB file, it’s split into 8 blocks (1,024 MB ÷ 128 MB). The NameNode keeps metadata like:

FILE: example.txt

DIRECTORY: /data

SIZE: 1GB

BLOCKS:

- BLOCK1: ID=blk_001, LOCATION=datanode1:/disk1/data/block1

- BLOCK2: ID=blk_002, LOCATION=datanode2:/disk1/data/block2

...
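The metadata listing above can be modeled as a small data structure. The sketch below computes the block count for a file and assigns each block to DataNodes. The round-robin placement, the DataNode names, and the `plan_blocks` helper are assumptions for illustration (real HDFS placement is rack-aware), and the replication factor of 3 matches the HDFS default.

```python
import math

BLOCK_SIZE = 128 * 1024 * 1024  # 128 MB default block size
REPLICATION = 3                 # HDFS default replication factor


def plan_blocks(file_size, datanodes, block_size=BLOCK_SIZE, replication=REPLICATION):
    """Return one record per block: id, length in bytes, replica locations."""
    if replication > len(datanodes):
        raise ValueError("not enough DataNodes for the requested replication")
    num_blocks = math.ceil(file_size / block_size)
    blocks = []
    for i in range(num_blocks):
        # The last block may be smaller than block_size.
        length = min(block_size, file_size - i * block_size)
        # Toy round-robin placement across the available DataNodes.
        replicas = [datanodes[(i + r) % len(datanodes)] for r in range(replication)]
        blocks.append({"id": f"blk_{i + 1:03d}", "length": length, "locations": replicas})
    return blocks


datanodes = ["datanode1", "datanode2", "datanode3", "datanode4"]
metadata = {
    "file": "example.txt",
    "directory": "/data",
    "size": 1024 ** 3,  # 1 GB
    "blocks": plan_blocks(1024 ** 3, datanodes),
}
```

With these inputs, `metadata["blocks"]` holds 8 records, one per 128 MB block, each listing three DataNodes.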

You can view file metadata using:

hdfs fsck <file-path> -files -blocks -locations

How Reading from HDFS Works

Client sends read request → NameNode

NameNode returns block locations

Client fetches blocks directly from DataNodes

Client reassembles blocks into the full file
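The read steps above can be sketched as a toy in-memory model. The `DataNode`, `NameNode`, and `read_file` names below are hypothetical stand-ins, not the HDFS client API. The fallback to another replica when a DataNode is unreachable is included to show why replication gives fault tolerance on reads.

```python
class DataNode:
    def __init__(self, name):
        self.name = name
        self.blocks = {}   # block_id -> bytes
        self.alive = True

    def read_block(self, block_id):
        if not self.alive:
            raise ConnectionError(f"{self.name} is unreachable")
        return self.blocks[block_id]


class NameNode:
    def __init__(self):
        # path -> ordered list of (block_id, [DataNode, ...])
        self.files = {}

    def get_block_locations(self, path):
        return self.files[path]


def read_file(namenode, path):
    """Client-side read: look up block locations, then fetch from DataNodes."""
    chunks = []
    for block_id, replicas in namenode.get_block_locations(path):
        for datanode in replicas:
            try:
                chunks.append(datanode.read_block(block_id))
                break  # got this block; move on to the next one
            except ConnectionError:
                continue  # try the next replica
        else:
            raise IOError(f"no live replica for {block_id}")
    # Reassemble the blocks, in order, into the full file.
    return b"".join(chunks)


# Tiny demo cluster: two blocks, each stored on two DataNodes.
dn1, dn2, dn3 = DataNode("datanode1"), DataNode("datanode2"), DataNode("datanode3")
dn1.blocks["blk_001"] = dn2.blocks["blk_001"] = b"hello "
dn2.blocks["blk_002"] = dn3.blocks["blk_002"] = b"hdfs"
nn = NameNode()
nn.files["/data/example.txt"] = [("blk_001", [dn1, dn2]), ("blk_002", [dn2, dn3])]
```

Note that the NameNode only hands out locations; the file bytes never pass through it, which keeps the master from becoming a bandwidth bottleneck.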

How Writing to HDFS Works

Client sends write request → NameNode

NameNode responds with target DataNodes for each block

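Client splits the file into blocks and streams each block to the first target DataNode, which forwards it along a pipeline to the other replicas

DataNodes acknowledge each block back through the pipeline to the client and report the stored block to the NameNode

Client tells the NameNode the file is complete, and the NameNode finalizes the metadata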
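A toy model of the write path might look like the sketch below. It assumes a simplified pipeline in which each DataNode stores the block, forwards it to the next replica, and returns acknowledgments back up the chain to the client. The class and method names, the round-robin placement, and the 4-byte block size are all assumptions chosen to keep the demo small.

```python
class DataNode:
    def __init__(self, name):
        self.name = name
        self.blocks = {}  # block_id -> bytes

    def write_block(self, block_id, data, downstream):
        """Store the block, forward it down the pipeline, and return acks."""
        self.blocks[block_id] = data
        acks = [self.name]
        if downstream:
            acks += downstream[0].write_block(block_id, data, downstream[1:])
        return acks


class NameNode:
    def __init__(self, datanodes, replication=2):
        self.datanodes = datanodes
        self.replication = replication
        self.files = {}  # path -> list of (block_id, [datanode names])
        self._next_id = 1

    def allocate_block(self):
        """Pick target DataNodes for a new block (toy round-robin placement)."""
        block_id = f"blk_{self._next_id:03d}"
        start = (self._next_id - 1) % len(self.datanodes)
        targets = [self.datanodes[(start + r) % len(self.datanodes)]
                   for r in range(self.replication)]
        self._next_id += 1
        return block_id, targets

    def complete_file(self, path, block_records):
        """Record the file's blocks once the client reports it is done."""
        self.files[path] = block_records


def write_file(namenode, path, data, block_size):
    records = []
    for offset in range(0, len(data), block_size):
        block = data[offset:offset + block_size]
        block_id, targets = namenode.allocate_block()
        acks = targets[0].write_block(block_id, block, targets[1:])
        if len(acks) != len(targets):
            raise IOError(f"incomplete pipeline for {block_id}")
        records.append((block_id, acks))
    namenode.complete_file(path, records)
    return records


nodes = [DataNode("datanode1"), DataNode("datanode2"), DataNode("datanode3")]
nn = NameNode(nodes, replication=2)
records = write_file(nn, "/data/example.txt", b"abcdefghij", block_size=4)
```

The client only talks to the first DataNode in each pipeline, so replication costs it one upload per block regardless of the replication factor.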

Common HDFS Commands

| Action | Command |
|---|---|
| List files | `hdfs dfs -ls <path>` |
| Copy to HDFS | `hdfs dfs -copyFromLocal <local> <hdfs>` |
| Change permissions | `hdfs dfs -chmod 777 <path>` |
| Rename file | `hdfs dfs -mv <src> <dest>` |
| View contents | `hdfs dfs -cat <file>` |
| Check disk usage | `hdfs dfs -du -s -h /user/test` |
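If you want to script these commands, one option is a thin wrapper around the `hdfs` CLI. The sketch below is a minimal example under stated assumptions: `hdfs_dfs` and `run` are hypothetical helper names, only flags from the table above are used, and the command is executed only when an `hdfs` binary is found on the `PATH`.

```python
import shutil
import subprocess


def hdfs_dfs(*args):
    """Build the argv list for an `hdfs dfs` subcommand."""
    return ["hdfs", "dfs", *args]


def run(argv):
    """Run the command if its binary is installed; otherwise return None."""
    if shutil.which(argv[0]) is None:
        return None
    # check=False: let the caller inspect returncode and stderr.
    return subprocess.run(argv, capture_output=True, text=True, check=False)


list_cmd = hdfs_dfs("-ls", "/user/test")
usage_cmd = hdfs_dfs("-du", "-s", "-h", "/user/test")

if __name__ == "__main__":
    result = run(list_cmd)
    print("hdfs CLI not found" if result is None else result.stdout)
```

Passing the arguments as a list rather than a single shell string avoids quoting problems with paths that contain spaces.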

Hadoop YARN

Once the data is stored in HDFS, it needs to be processed.

This is where YARN (Yet Another Resource Negotiator) comes in — it manages resources across the cluster.

Like HDFS, YARN also follows a master-slave architecture:

| Component | Role |
|---|---|
| ResourceManager | Manages overall cluster resources |
| NodeManager | Runs on each worker node, reporting usage and launching containers |

YARN decouples resource management from computation, enabling multiple frameworks (e.g., MapReduce, Spark, Hive) to run simultaneously on the same cluster.
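To illustrate the split between the ResourceManager and NodeManagers, here is a toy allocation sketch. It uses a simple first-fit rule, which is an assumption for illustration and not how YARN's actual schedulers decide placement. The class names mirror the roles in the table above, while the method names, node names, and resource figures are hypothetical.

```python
class NodeManager:
    def __init__(self, name, memory_mb, vcores):
        self.name = name
        self.free_memory_mb = memory_mb
        self.free_vcores = vcores

    def can_fit(self, memory_mb, vcores):
        return self.free_memory_mb >= memory_mb and self.free_vcores >= vcores

    def launch_container(self, memory_mb, vcores):
        # Reserve the resources for the new container.
        self.free_memory_mb -= memory_mb
        self.free_vcores -= vcores


class ResourceManager:
    def __init__(self, node_managers):
        self.node_managers = node_managers
        self.containers = []  # (application, node name) for each container

    def allocate(self, app, memory_mb, vcores):
        """First-fit placement: return the node name, or None if nothing fits."""
        for nm in self.node_managers:
            if nm.can_fit(memory_mb, vcores):
                nm.launch_container(memory_mb, vcores)
                self.containers.append((app, nm.name))
                return nm.name
        return None


rm = ResourceManager([NodeManager("worker1", 4096, 2), NodeManager("worker2", 8192, 4)])
placements = [
    rm.allocate("mapreduce-job", 2048, 1),
    rm.allocate("spark-job", 4096, 2),
    rm.allocate("spark-job", 2048, 1),
    rm.allocate("mapreduce-job", 8192, 4),
]
```

The ResourceManager knows nothing about what each application computes; it only tracks memory and cores, which is what lets different frameworks share one cluster.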

Summary

Hadoop revolutionized big data processing by introducing:

Distributed storage (HDFS)

Cluster resource management (YARN)

Parallel computation (MapReduce)

It laid the foundation for modern data platforms and continues to influence engines and platforms such as Spark, Flink, and Databricks.